Decades later, the time traveler, now an old man, confesses that his time machine never existed. His great invention was the story itself. It was his narrative, not the technology, that moved society forward.

Like the people in “The Toynbee Convector,”[1] we too find ourselves at a pivotal moment. The rapid growth of artificial intelligence (AI) has brought us to a technological crossroads. For all the progress this technology promises, it raises just as many concerns (or more) about its potential for harm. Whether you see AI as a force for progress or approach it with caution, its rapid pace forces us to think about the role we’d like it to play in our future. It’s worth asking how we can use this technology to build the future we want.

On the most basic level, generative AI tools like ChatGPT and DALL·E create new content, such as text, images, music, and code, by drawing on patterns learned from their training data. From those patterns, AI can draft a movie script, write code, translate text into multiple languages, or plan a five-day trip to Provence.

When OpenAI’s ChatGPT, then built on GPT-3.5, launched in November 2022, it became the fastest-growing consumer product in history, amassing over 100 million users in two months. Since then, venture capitalists, governments, and corporations have poured billions into generative AI, betting on its capacity to revolutionize fields as varied as Hollywood, healthcare, business, education, and the arts.

AI proponents respond to criticism by pointing out that every technological advance has been met with skepticism and fear. But radio didn’t break music, photography didn’t end painting, and calculators didn’t cause us to abandon math. With each leap, jobs are lost, but new jobs are also created. People have feared technology before, and we’ve kept moving forward.

And yet, AI feels different. It learns, evolves, and operates at a speed that’s both exhilarating and disorienting. Previous technological leaps disrupted industries too, but AI’s pace of advancement makes it a uniquely unpredictable force. It’s accelerating in ways that would have seemed implausible just a decade ago.

AI’s fast-paced development brings forth a range of pressing questions: Will automation displace jobs faster than new ones emerge? How will AI-powered tools, such as facial recognition and data-mining algorithms, erode our privacy? Will AI systems amplify bias, leading to discriminatory outcomes in areas like hiring, law enforcement, and lending? Will tools that mimic creativity undermine human artistry and decision-making? What are the ethical risks if AI systems advance unchecked, eroding trust or deepening societal divides?

At the same time, if we apply what we know about creativity and storytelling to steer AI toward projects that benefit society, our own ingenuity could grow exponentially as well. But realizing that potential requires deliberate action.

“Move fast and break things” may have been Silicon Valley’s rallying cry for years, but as we’ve seen before with social media, the costs of moving too quickly can be steep. Ethical AI development requires foresight, collaboration, and a willingness to prioritize societal benefit over profit.

For that reason, this moment calls for careful decision-making about how we develop, deploy, and regulate AI. The choices we make today will determine whether AI becomes a tool for progress or a source of disruption. Just as the fabricated future in Bradbury’s story spurred real progress, AI has the potential to generate narratives that guide its own development and shape society’s expectations of how it should be used.

At UC Irvine, researchers and innovators are embracing this responsibility, working to ensure that AI’s evolution is shaped by a commitment to societal benefit over financial gain. Instead of asking how AI can be faster or more profitable, UC Irvine faculty are asking how they can shape this technology to reflect the principles the university stands by.

At the center of this vision is the NarrA.I.tive Story Studio, a bold new initiative from UC Irvine’s Beall Applied Innovation (BAI). To be located at the Cove in UC Irvine Research Park, NarrA.I.tive will blend the art of storytelling with the technology of generative AI. It will serve as both a creative space and a community hub, offering state-of-the-art tools such as motion capture, AI-assisted video software, and high-powered workstations that will enable students and creators to produce professional-grade work.

Errol Arkilic, UC Irvine’s Chief Innovation Officer, sees storytelling not just as entertainment but as a catalyst for change.

“Storytelling is a fundamental way humans process and share information,” Arkilic says. “When it comes to changing the values, mindsets, rules, and goals of a system, story is foundational.”

Stories give structure to our experiences, connect us to universal themes, and help us make sense of complex emotions or events. They allow us to see patterns, draw lessons, and imagine possibilities. Through storytelling, we can explore questions about who we are, where we come from, and where we hope to be. NarrA.I.tive hopes to provide the physical space, tools, and community to allow these stories to grow.

Stuart Mathews, Director of Industry Alliances at Beall Applied Innovation, leads the NarrA.I.tive initiative.

“The centerpiece of NarrA.I.tive is the AI Story Studio—a place where technology fuels human creativity to shape the future of storytelling. My dream is to empower creators with AI tools that enhance imagination, enabling stories that inspire, challenge, and transform the world. This is where innovation meets purpose, and where the next generation of professional and student storytellers will redefine culture in ways we’ve never seen before,” he says.

But the vision of NarrA.I.tive goes beyond just tools. Through events, panel discussions, and collaborative workshops, BAI seeks to spark dialogue and build community around ethics and AI. The goal of these gatherings is to unite leaders in AI, humanities, the arts, and industry to tackle pressing questions about AI and social responsibility.

Last year, BAI and the School of Humanities co-hosted AlphaPersuade: A Summit on Ethical AI, a good example of the kind of event NarrA.I.tive hopes to host in the future. AlphaPersuade brought together industry leaders and academics from across disciplines to examine the ethics of using artificial intelligence to persuade. The discussion centered on AI’s capacity to influence, manipulate, and even alter people’s fundamental beliefs.

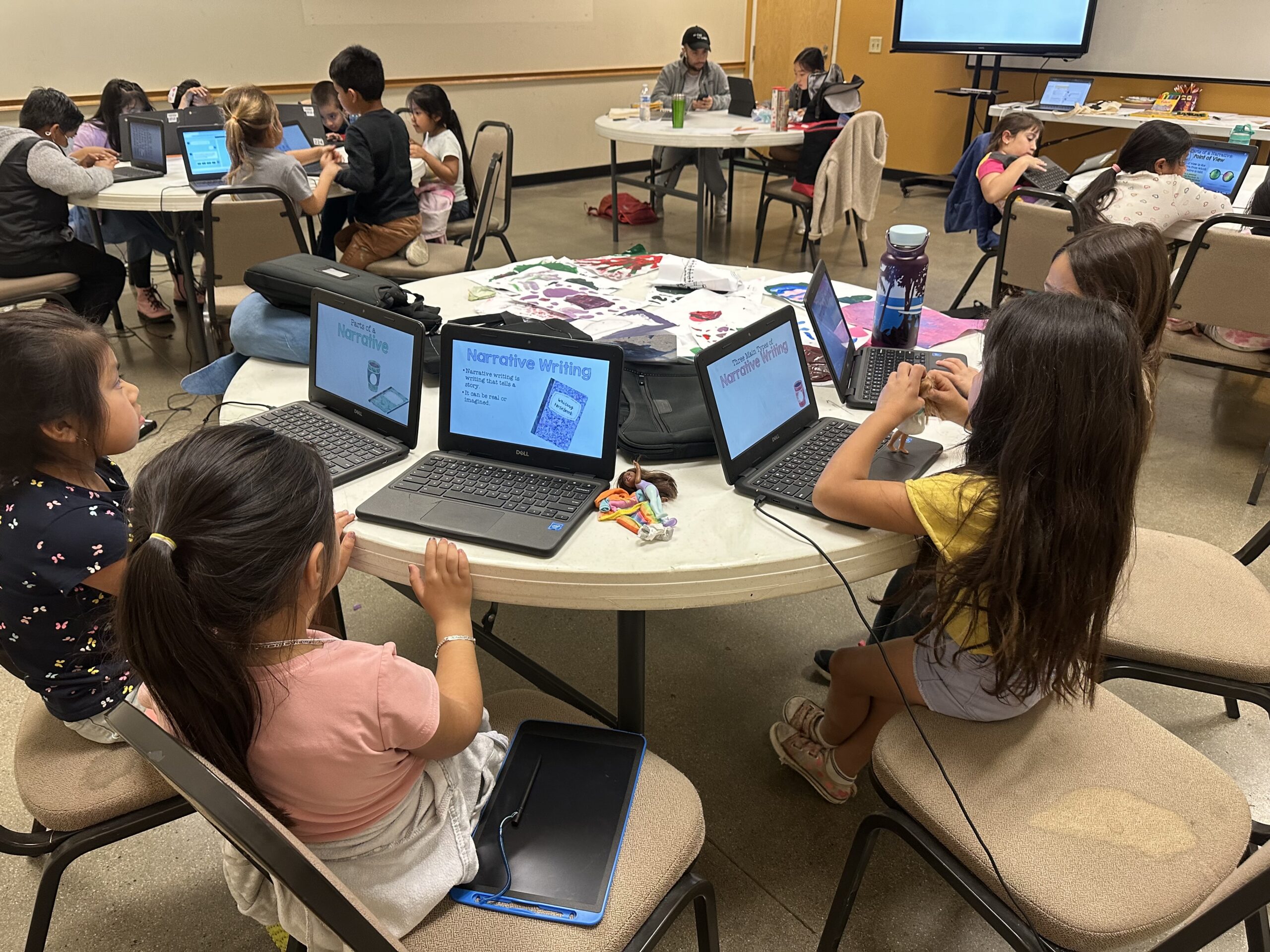

At UC Irvine, ideas don’t just spark discussions; they drive real-world solutions. Informatics and education professor Kylie Peppler, for example, teamed up with graduate student Ariel Han to launch StoryAI, a startup whose platform uses generative AI to craft culturally relevant narratives that help students improve their writing skills.

StoryAI guides students step by step through the writing process, from choosing a genre to creating characters and receiving feedback. If they get stuck, the platform offers creative prompts to spark ideas. It helps students write stories that are personal and tied to their own culture, language, and interests. For younger students, StoryAI provides an option to draft stories by speaking, helping them gain confidence as they improve their literacy. Unlike general-purpose tools such as ChatGPT and Claude, which can write for users, StoryAI encourages students to think through and create stories on their own.

“We don’t want an AI that takes away the learning opportunities,” says Peppler, who also directs Creativity Labs and co-directs the Connected Learning Lab on campus. “We want an AI that gets you excited to learn, encourages you to iterate, and helps you produce better-quality work.”

The idea for StoryAI emerged from a common struggle. As a non-native English speaker, Han faced challenges writing her dissertation while also helping her daughter with school writing assignments. Recognizing an opportunity, Han and Peppler turned to generative AI to help bridge that language gap. StoryAI now supports over 100 languages, enabling translation and seamless switching between languages within a single story. It also tracks how much of the work is done by the student versus the AI, gradually stepping back as the student’s writing skills improve.

“We want students to be able to express themselves in whatever ways they can and feel that they have a seat at the table,” Peppler says.

What excites Peppler and her team most about StoryAI is hearing students call it their “writing buddy.”

“They’re really enjoying the process,” Peppler says. “I love hearing them chuckle about what they’re writing and at the images their words bring to life.”

Peppler and Han brought StoryAI to fruition with the help of a Proof of Product (PoP) grant from BAI. PoP grants are part of a UC Irvine initiative that provides researchers with not only critical funding but also access to industry expertise, helping to bridge the gap between academia and the marketplace. In this way, BAI acts as a broker, connecting researchers with the right partners and investors, and partners and investors with the right researchers.

“Many brilliant ideas emerge within academia, but far too few ever make it beyond university walls to transform the world. The PoP grants do a good job of enabling this,” Peppler says.

Another AI project emerging from UC Irvine is An AI That’s a Better Friend, from informatics professor Bill Tomlinson. It’s an advanced chatbot designed to create personalized, emotionally engaging interactions by forming lasting social memories. Unlike traditional chatbots, which forget past conversations and lack emotional continuity, this AI can remember relationships, experiences, and personal details over time, making interactions feel more meaningful. Its potential impact on society could be significant, especially in areas such as combating loneliness, supporting mental health, and preserving the life stories of elders. By integrating social intelligence and memory into AI, An AI That’s a Better Friend has the potential to redefine how people interact with technology.

StoryAI and An AI That’s a Better Friend demonstrate how AI can be used not just as a tool for automation, but as a way to enhance human connection, creativity, and learning. Beyond these tools, the university is positioned to lead the way in cultivating the next generation of AI experts. With California home to AI leaders such as OpenAI, Google AI, and Anthropic, as well as the entertainment industry, UC Irvine has a unique opportunity to educate the workers who will shape and guide these industries. The university is developing standalone courses and working toward a minor, and eventually a major, that integrates computer science with the humanities to promote a distinctly human-centered approach to AI development.

The future of AI is being drafted in real time. Whether you see artificial intelligence as the first step toward robot overlords or the engine that will catapult us into a golden age of technological progress, one thing’s for sure: we can’t look away. Artificial intelligence is already shaping our daily lives, and its influence will only become faster-moving and more pervasive. The decisions we make today about ethics, safety, and who gets to decide what progress means are pivotal. They will determine whether AI becomes a tool of empowerment or leaves us feeling like uneasy passengers on a ride we never agreed to take. If we don’t engage now, we risk losing our say in where the tracks lead.

The future of artificial intelligence is still unwritten, but the stories we tell ourselves about it (of innovation, of ethics, of creativity) will shape how we get there. Peppler, whose work revolves around fostering creativity through AI, sees storytelling as a critical part of this journey.

“We want to think about how AI can support our creativity, learning, and this next chapter of possibility,” she says.

The story of a better world told by Bradbury’s time traveler gave people something tangible to strive for. It was a story powerful enough to shape reality. The same could be true for artificial intelligence today. The future of AI is not predetermined; it is ours to design. Through creativity, collaboration, and a commitment to ethics, BAI hopes that the stories we tell, and the futures we build, will be worthy of the possibilities AI holds. Sometimes, imagining a better world isn’t just the first step. It’s the most important one.